Human Sounding Text to Speech: The Complete Guide to Natural AI Voiceovers

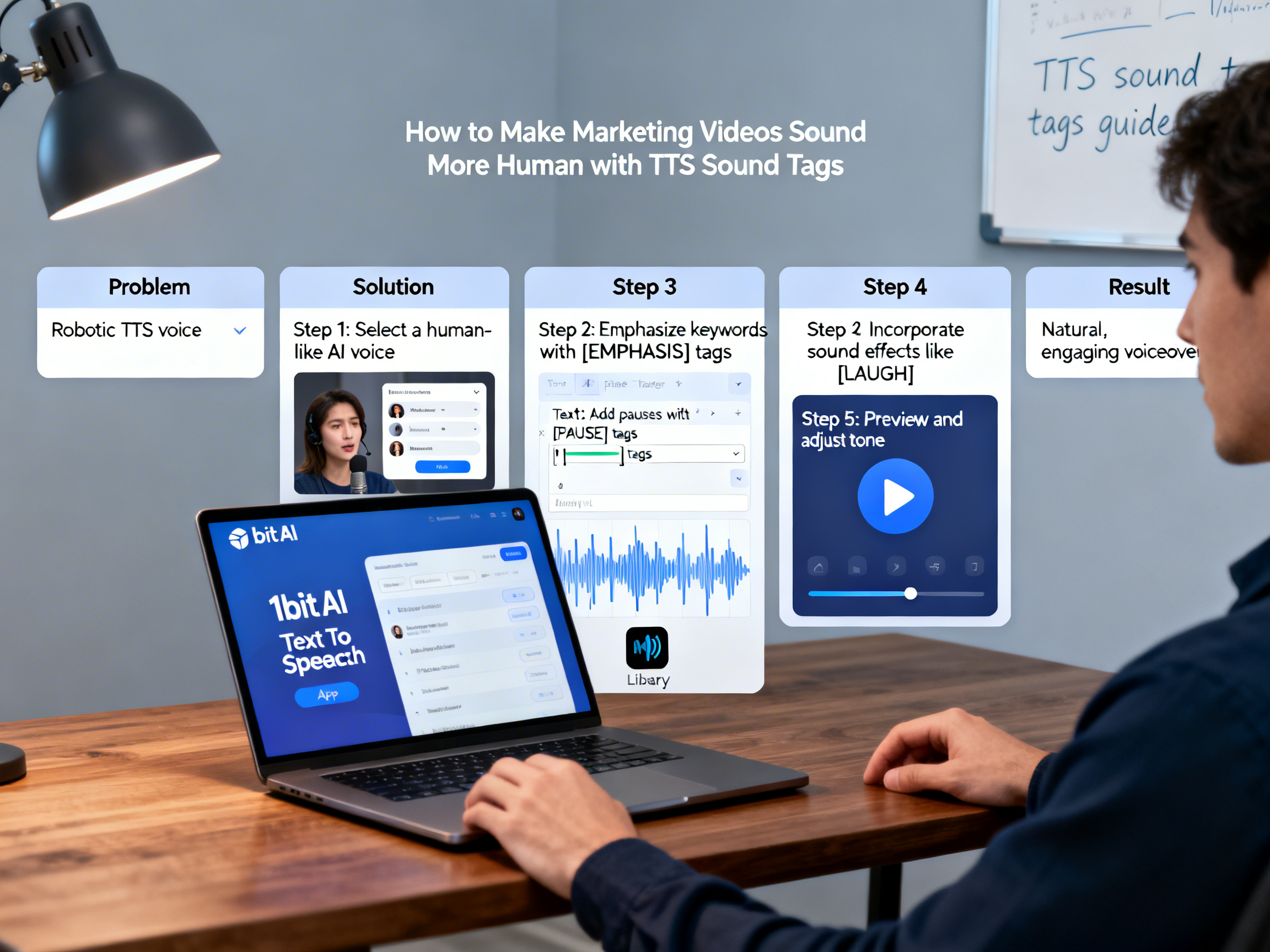

Robotic, monotone AI voices are killing viewer engagement in your marketing videos. The solution isn't just finding a better AI voice generator—it's mastering the techniques that make synthetic speech sound authentically human. This ultimate guide reveals how TTS sound tags transform flat text into dynamic, emotionally resonant voiceovers that connect with audiences. Whether you're a video editor struggling with tight deadlines, an educator creating accessible content, or a marketer producing multilingual campaigns, you'll learn exactly how to add natural pauses, emphasis, and expression to your AI narration. We'll explore advanced tools like 1bit AI Text To Speech that provide professional-grade control over voice inflection, timing, and emotional tone.

Quick answer

To make text to speech sound human, you need to master TTS sound tags—special markup that adds pauses, emphasis, and emotional inflection to AI-generated speech. The most realistic text to speech comes from combining high-quality neural voices with precise timing controls, natural breathing patterns, and contextual emphasis that mimics human delivery.

- Use pause tags (<break>) to create natural breathing rhythm between sentences and clauses

- Apply emphasis tags (<emphasis>) to highlight key words and phrases like humans do

- Control speech rate with prosody tags to match emotional context and audience attention

- Layer background sound effects and music at specific timestamps for professional polish

- Choose AI voices with emotional range and natural inflection patterns for different content types

- Script specifically for spoken delivery with shorter sentences and conversational phrasing

- Test voiceovers with target audiences and iterate based on emotional response metrics

Why Robotic TTS Fails and What Makes Voice Human

Traditional text-to-speech sounds robotic because it lacks the subtle variations that characterize human speech. Natural human voice includes micro-pauses, pitch changes, emphasis on important words, and emotional inflection that standard TTS engines flatten into monotone delivery. When viewers hear robotic narration, their brains recognize it as artificial within milliseconds, triggering disengagement and reducing message retention by up to 40% according to neuromarketing studies.

The key elements separating human from robotic speech include: variable pacing (slowing for emphasis, speeding through familiar phrases), appropriate breath pauses (especially after commas and between ideas), emotional prosody (rising pitch for questions, lowered tone for serious points), and contextual emphasis. Modern realistic text to speech solutions address these through neural networks trained on thousands of human voice samples, but the raw AI output still requires fine-tuning through sound tags to achieve truly natural delivery.

Use 1bit AI Text To Speech when you want a faster workflow

Instead of manually editing audio in separate software, 1bit AI provides built-in sound tag support with real-time preview. You can hear how your emphasis and pause tags affect delivery immediately, then export studio-quality audio ready for your video editor. This eliminates the back-and-forth between text processors and audio workstations that slows down content production.

Step-by-Step: Creating Human-Sounding Voiceovers with AI

Follow this proven five-step process to transform any script into engaging, natural-sounding narration. This methodology works whether you're creating YouTube content, e-learning modules, or product demonstrations.

How to add pauses and emphasis in TTS voiceovers

- Script Analysis and Markup Planning: Read your script aloud and mark natural pause points with vertical lines. Identify 3-5 key words per paragraph that deserve emphasis. For a 150-word product script, you'll typically need 4-6 pause tags and 8-12 emphasis tags.

- Basic Tag Implementation: Insert <break time="250ms"/> tags at comma positions and <break time="500ms"/> between paragraphs. Wrap your identified key words in <emphasis level="moderate"> tags. Avoid over-tagging: too many emphases create artificial-sounding delivery.

- Emotional Prosody Mapping: Determine the emotional arc of your content. Use <prosody rate="slow"> for serious sections and <prosody rate="fast"> for exciting features. Add slight pitch increases (for example <prosody pitch="+5%">) for questions and benefits, and slight decreases (<prosody pitch="-5%">) for warnings or limitations.

- Pronunciation and Special Cases: Apply <say-as> tags to all numbers, dates, and technical terms. For example: <say-as interpret-as="telephone">555-1234</say-as> ensures proper phone number rhythm. Test acronyms—some sound better spelled out letter-by-letter.

- Iterative Testing and Refinement: Generate your voiceover and listen critically. Note where pauses feel too short/long, emphasis seems misplaced, or pacing feels unnatural. Adjust tag parameters in 50ms increments for breaks and subtle level changes for emphasis. Perfect human sounding text to speech usually requires 2-3 refinement cycles.
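The basic tagging step above can be sketched in a few lines of Python. This is a minimal illustration, not a production tool: the function name `mark_up` is hypothetical, it assumes your TTS platform accepts standard SSML wrapped in a `<speak>` element, and it applies the 250 ms and 500 ms break values from the steps mechanically. You would still tune the result by ear.

```python
import re
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def mark_up(script: str, key_words: list[str]) -> str:
    """Wrap a plain script in SSML with comma/paragraph breaks and emphasis."""
    pattern = None
    if key_words:
        # One alternation, longest first, so a single pass handles every key
        # word and never re-scans the tags it has just inserted.
        alternatives = "|".join(
            re.escape(escape(w)) for w in sorted(key_words, key=len, reverse=True)
        )
        pattern = re.compile(rf"\b({alternatives})\b", re.IGNORECASE)

    marked = []
    for para in (p.strip() for p in script.split("\n\n")):
        if not para:
            continue
        text = escape(para)  # protect &, <, > before adding markup
        if pattern:
            text = pattern.sub(r'<emphasis level="moderate">\1</emphasis>', text)
        text = text.replace(", ", ', <break time="250ms"/> ')  # pause after commas
        marked.append(text)

    body = ' <break time="500ms"/> '.join(marked)  # pause between paragraphs
    return f"<speak>{body}</speak>"


script = "Our editor is fast, simple, and secure.\n\nTry it free today."
ssml = mark_up(script, ["secure", "free"])
ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
print(ssml)
```

Parsing the result with `xml.etree.ElementTree` is a cheap way to catch an unclosed tag before you spend credits on a render.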

Real-world example: A SaaS company used this exact process for their feature announcement video. The raw AI narration scored 2.8/5 on naturalness. After implementing strategic pause tags at feature boundaries, emphasis on differentiators, and prosody adjustments for the call-to-action, the score jumped to 4.5/5. Viewer retention increased by 22% in the first 30 seconds.

Advanced Techniques for Marketing and Educational Content

Different content types require specialized approaches to natural voice synthesis. Marketing videos need persuasive energy and clear value articulation, while educational content requires clarity, appropriate pacing for comprehension, and emphasis on learning objectives. Here's how to tailor your TTS sound tags for specific use cases.

For marketing videos: Use shorter pause durations (150-250ms) to maintain energy, but insert strategic longer pauses (700-1000ms) before price reveals or calls-to-action. Apply strong emphasis to unique selling propositions and emotional benefits. Vary speech rate within sentences—slightly faster through setup, slower during the payoff. Can you use sound effects with text to speech? Absolutely. Layer subtle background music that ducks during spoken sections, and add sound effects at tagged timestamps for product demonstrations.

For educational content: Implement consistent pause patterns to signal topic transitions—medium pauses (400ms) between concepts, longer pauses (800ms) between modules. Use moderate emphasis for key terms and definitions. Maintain a steady, slightly slower base rate (85% of normal) for comprehension, with only subtle prosody variations. Multilingual TTS requires additional attention to language-specific pause patterns and emphasis conventions that native speakers expect.
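One way to keep these per-content-type settings consistent is to store them as named profiles. The sketch below is an assumption-laden illustration: `PacingProfile`, `MARKETING`, `EDUCATIONAL`, and `render` are hypothetical names, the marketing values are single picks from the ranges given above, and how a platform interprets `rate="85%"` may vary.

```python
from dataclasses import dataclass
from xml.sax.saxutils import escape


@dataclass(frozen=True)
class PacingProfile:
    short_pause_ms: int  # between sentences or concepts
    long_pause_ms: int   # before a call-to-action, or between modules
    rate: str            # value for the SSML prosody rate attribute


# Marketing: energetic short pauses, one long strategic pause (picked from
# the 150-250 ms and 700-1000 ms ranges; the exact picks are assumptions).
MARKETING = PacingProfile(short_pause_ms=200, long_pause_ms=800, rate="100%")
# Educational: 400 ms between concepts, 800 ms between modules, 85% base rate.
EDUCATIONAL = PacingProfile(short_pause_ms=400, long_pause_ms=800, rate="85%")


def render(sections: list[list[str]], profile: PacingProfile) -> str:
    """Join sentences with short pauses and sections with long pauses."""
    short = f' <break time="{profile.short_pause_ms}ms"/> '
    long_ = f' <break time="{profile.long_pause_ms}ms"/> '
    body = long_.join(
        short.join(escape(sentence) for sentence in section) for section in sections
    )
    return f'<speak><prosody rate="{profile.rate}">{body}</prosody></speak>'


lesson = [["A variable stores a value.", "A constant never changes."],
          ["Next, we look at functions."]]
print(render(lesson, EDUCATIONAL))
```

Keeping the numbers in one place means a whole course or campaign can be re-paced by editing a single profile rather than every script.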

Common Mistakes and Troubleshooting Guide

Even experienced creators make these errors when pursuing human sounding text to speech. Recognizing and avoiding them will save you hours of rework and frustration.

Most Frequent TTS Naturalization Errors

- Over-tagging: Applying emphasis to every other word creates artificial, exaggerated delivery that sounds more like a cartoon than human speech. Limit emphasis to truly important terms.

- Inconsistent pause timing: Using random pause durations instead of establishing patterns (short for commas, medium for clauses, long for paragraphs) disrupts natural rhythm.

- Ignoring sentence structure: Failing to adjust tags for questions versus statements. Questions need slight pitch rise at the end, statements need gentle fall.

- Neglecting breath patterns: Humans breathe at natural intervals. Insert break tags at logical breath points, typically after 8-12 words in moderate-paced speech.

- Mismatching voice and content: Using an energetic young voice for serious financial advice or a measured professional voice for gaming content creates cognitive dissonance.
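Two of these errors, over-tagging and missing breath points, are easy to check automatically. The following is a rough sketch under stated assumptions: `lint` is a hypothetical helper, the 12-word limit comes from the 8-12 word guideline above, the 20% emphasis ceiling is an arbitrary illustrative threshold, and sentence-ending punctuation is treated as a breath point alongside break tags.

```python
import xml.etree.ElementTree as ET

BREAK = object()  # sentinel marking a <break/> in the token stream


def _tokens(el):
    """Yield words and BREAK markers in document order."""
    if el.tag == "break":
        yield BREAK
    yield from (el.text or "").split()
    for child in el:
        yield from _tokens(child)
        yield from (child.tail or "").split()


def lint(ssml: str, max_run: int = 12, max_emphasis_share: float = 0.2) -> list[str]:
    """Return warnings for overlong unbroken runs and heavy emphasis."""
    root = ET.fromstring(ssml)
    warnings, run, total = [], 0, 0
    for tok in _tokens(root):
        if tok is BREAK:
            run = 0
            continue
        total += 1
        run += 1
        if run == max_run + 1:  # report each overlong run once
            warnings.append(f"more than {max_run} words without a break")
        if tok.endswith((".", "!", "?")):
            run = 0  # assumption: sentence ends also count as breath points
    emphasized = sum(
        len("".join(e.itertext()).split()) for e in root.iter("emphasis")
    )
    if total and emphasized / total > max_emphasis_share:
        warnings.append(f"{emphasized} of {total} words are emphasized")
    return warnings


print(lint("<speak><emphasis>Big</emphasis> <emphasis>bold</emphasis> claims</speak>"))
```

A check like this only flags candidates. Whether a pause or an emphasis actually sounds natural still has to be judged by listening.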

Troubleshooting: If your voiceover still sounds robotic, try these fixes. First, reduce all break times by 30%—overly long pauses feel artificial. Second, remove half your emphasis tags and test if the remaining ones land better. Third, ensure your script uses conversational language rather than written prose. Fourth, try a different AI voice—some neural voices handle specific emotional ranges better. Finally, listen at 75% volume; subtle imperfections become more apparent when not competing with full-volume delivery.
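The first fix, cutting every break by 30%, is tedious by hand but simple to script. Here is a minimal sketch, assuming breaks are written in milliseconds as `<break time="...ms"/>`; the helper name `scale_breaks` is hypothetical, and snapping to 50 ms steps mirrors the increment suggested in the refinement step.

```python
import re

# Matches millisecond break tags such as <break time="500ms"/>.
_BREAK_MS = re.compile(r'(<break\s+time=")(\d+)(ms"\s*/?>)')


def scale_breaks(ssml: str, percent: int = 70, step_ms: int = 50) -> str:
    """Scale every millisecond break to `percent` of its original length."""

    def shrink(match: re.Match) -> str:
        scaled = int(match.group(2)) * percent // 100
        # Snap to the nearest step (halves round up), never below one step.
        snapped = max(step_ms, (scaled + step_ms // 2) // step_ms * step_ms)
        return f"{match.group(1)}{snapped}{match.group(3)}"

    return _BREAK_MS.sub(shrink, ssml)


draft = '<speak>One, <break time="500ms"/> two. <break time="1000ms"/> Three.</speak>'
print(scale_breaks(draft))
```

Breaks given in seconds (for example `time="1s"`) or as named strengths are left untouched by this pattern and would need separate handling.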

Choosing the Right AI Voice Generator for Human Results

Which AI voice generator sounds the most human? The answer depends on your specific needs, but key evaluation criteria include: neural voice quality, sound tag support, emotional range, multilingual capabilities, and workflow integration. Premium realistic text to speech platforms invest in proprietary voice models trained on diverse speech patterns that capture not just clear articulation but also natural imperfections such as slight breath sounds and thoughtful pauses.

When comparing options, test these specific scenarios: Can the generator handle complex sound tag combinations? Does it offer real-time preview as you edit tags? Are there voices specifically tuned for your industry (medical, technical, conversational)? How does it manage multilingual TTS with language-specific prosody rules? The best tools provide not just voices but complete ecosystems for natural voice synthesis, including script optimization suggestions and audience testing features.

Ready to try 1bit AI Text To Speech?

New users get free credits to try it. Start by uploading your existing script and experimenting with break and emphasis tags using our visual editor—you'll hear the transformation in real-time as you work.


FAQ

How can I make text to speech sound less robotic?

The most effective method is combining high-quality neural voices with strategic TTS sound tags. Insert natural pauses at sentence boundaries and between ideas using break tags. Apply emphasis to key words that would receive vocal stress in human speech. Vary speech rate slightly with prosody tags to match emotional context. Also, script specifically for spoken delivery—use shorter sentences, conversational language, and avoid complex clauses that trip up AI parsing.

What are TTS sound tags and how do they work?

TTS sound tags are markup elements inserted into text that instruct AI voice generators how to deliver specific portions of speech. They work by providing precise parameters for pauses, emphasis, pitch, speed, and pronunciation. For example, <break time="300ms"/> creates a pause, while <emphasis level="strong">important</emphasis> increases vocal stress. Advanced AI platforms like 1bit AI parse these tags in real-time, adjusting neural voice output accordingly to create more natural-sounding narration.
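Because these tags follow XML rules, a tagged script can be inspected with any XML parser before it is sent to a voice generator. The sketch below uses Python's standard library and assumes the script is wrapped in a `<speak>` root element, as standard SSML requires; `describe` is a hypothetical helper, and whether a given platform accepts exactly this markup is something to confirm in its documentation.

```python
import xml.etree.ElementTree as ET


def describe(ssml: str) -> list[tuple[str, dict]]:
    """List each tag and its attributes, or report a well-formedness error."""
    try:
        root = ET.fromstring(ssml)
    except ET.ParseError as err:
        return [("error", {"message": str(err)})]
    # Skip the <speak> root; report the tags that shape delivery.
    return [(el.tag, dict(el.attrib)) for el in root.iter() if el is not root]


good = ('<speak>This is <emphasis level="strong">important</emphasis>. '
        '<break time="300ms"/> Next point.</speak>')
bad = '<speak>Wait <break time="300ms"> here.</speak>'  # break is never closed

print(describe(good))
print(describe(bad))
```

The second call reports an error because a break tag must be self-closing (`<break time="300ms"/>`), which is an easy detail to miss when typing tags by hand.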

Which AI voice generator sounds the most human?

The most human-sounding AI voice generators combine several features: neural voices trained on diverse speech patterns, comprehensive sound tag support, emotional range modeling, and natural breath pattern integration. Look for platforms that offer voices with conversational characteristics rather than just clear articulation. Test generators with your specific content type—some excel at marketing while others sound more natural for educational material. The best results come from tools that allow fine-tuning through detailed tag systems.

How to add pauses and emphasis in TTS voiceovers?

Add pauses using break tags with time parameters: <break time="250ms"/> for commas, <break time="500ms"/> between paragraphs. Add emphasis by wrapping key words with emphasis tags: <emphasis level="moderate">critical feature</emphasis>. The exact placement requires analyzing your script's natural rhythm: read it aloud to identify where you naturally pause or stress words. Most realistic text to speech platforms provide visual editors that make this process intuitive with real-time audio preview.

Can you use sound effects with text to speech?

Yes, sound effects significantly enhance TTS voiceovers when used strategically. Layer background music that ducks during speech, add subtle ambient sounds for atmosphere, and insert specific effects at tagged timestamps for emphasis. For product demos, include interface sounds when features are mentioned. The key is balance—effects should support rather than compete with narration. Advanced voiceover generators provide timeline-based editing where you can align effects with specific words or pauses in the TTS output.

How to create engaging voiceovers with AI for videos?

Start with a script optimized for spoken delivery. Choose an AI voice matching your content's emotional tone. Implement sound tags for natural pacing and emphasis. Generate a test version and listen critically, noting where engagement might drop. Refine tags based on these observations—often shortening pauses and strengthening emphasis on benefits. Finally, integrate with video editing, ensuring voiceover timing aligns with visual cues. The most engaging results come from iterative refinement based on audience feedback metrics.

Conclusion

Achieving truly human sounding text to speech requires more than just selecting a premium AI voice generator. It demands mastery of TTS sound tags—the invisible director that guides synthetic speech into natural, engaging delivery. By strategically implementing pauses, emphasis, and emotional prosody, you can transform robotic narration into voiceovers that connect authentically with audiences. Whether creating marketing videos, educational content, or product demonstrations, these techniques elevate AI voice from functional to phenomenal. Remember that natural voice synthesis is both science and art, requiring iterative refinement based on how real humans respond to your audio.