TTS API Best Practices: The Complete Guide to Natural-Sounding Voiceovers

Creating natural-sounding AI voiceovers requires more than converting text to speech. This guide covers the techniques developers and content creators use to bring TTS API output closer to human narration. You'll learn how to use SSML tags for precise pauses, add emotion to synthetic voices, control pacing for different content types, and implement multilingual narration that sounds native. Whether you're building a video editing tool, educational platform, or marketing automation system, these TTS API best practices can improve your voiceover quality. We'll also show how 1bit AI Text To Speech applies these techniques through its developer-friendly API.

Quick answer

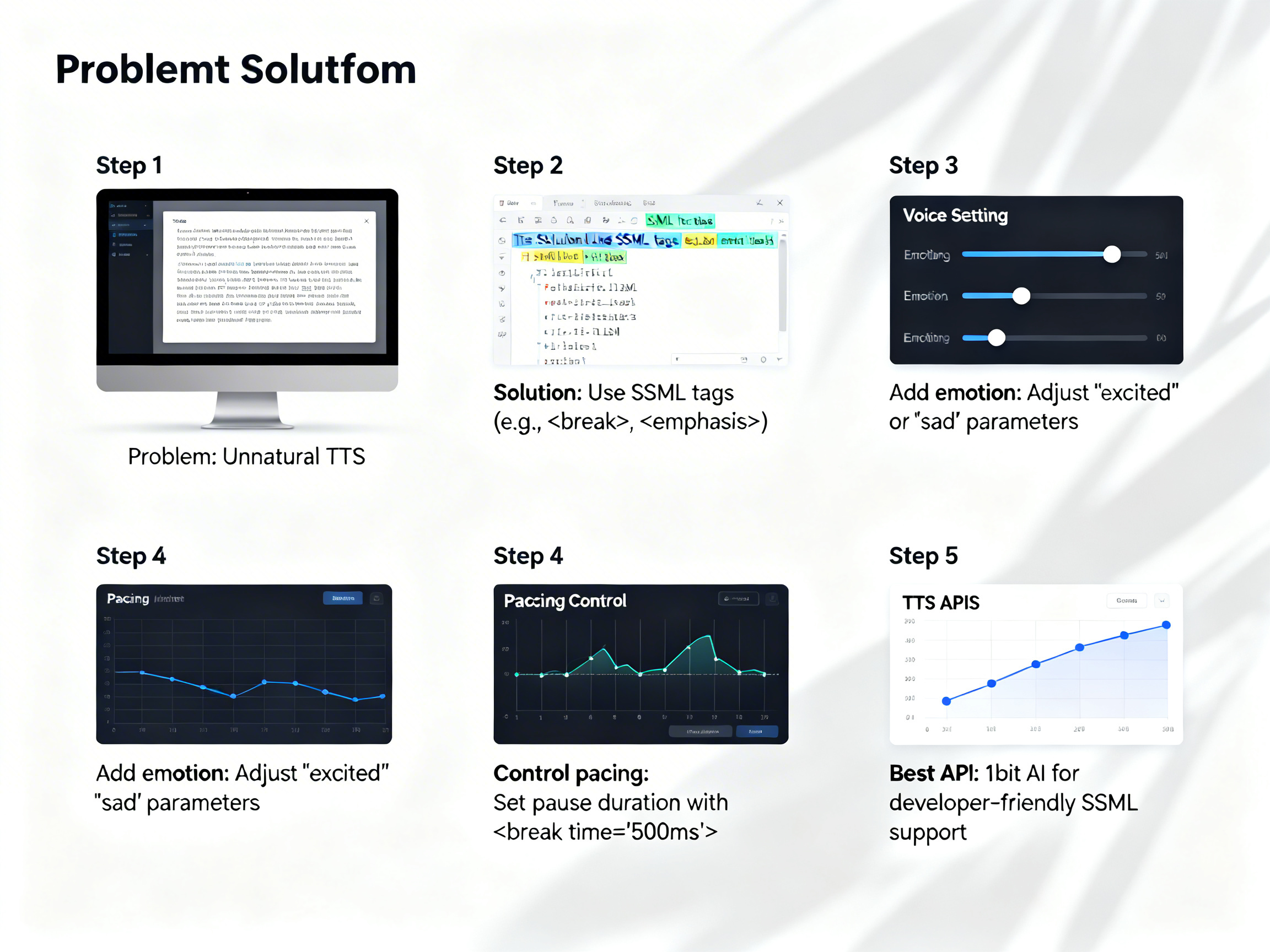

The most effective TTS API best practices combine SSML tags for structural control, emotional parameters for vocal expression, and strategic pauses for natural pacing. To make TTS sound more natural, you need to implement prosody tags for emphasis, break tags for breathing room, and emotional context markers that guide the AI's delivery.

- Use SSML break tags with duration values (200ms-1000ms) to create natural pauses between sentences and paragraphs

- Implement prosody tags to control rate, pitch, and volume for emphasis and emotional expression

- Add emotional context markers (like "excited" or "serious") before key passages to guide AI interpretation

- Structure text with proper punctuation and paragraph breaks before API submission

- Test different voice profiles for specific content types (narration vs. dialogue vs. announcements)

- Use phoneme tags for correct pronunciation of technical terms and proper nouns

- Implement batch processing with varied pacing for long-form content to maintain listener engagement

Mastering SSML Tags for Realistic Text to Speech API Output

Speech Synthesis Markup Language (SSML) is the foundation of professional TTS API best practices. Unlike plain text input, SSML gives you surgical control over how the AI interprets and delivers your content. The most effective realistic text to speech API implementations use a combination of structural, prosodic, and phonetic tags.

Break tags are your primary tool for natural pacing. While commas and periods provide basic pauses, SSML break tags with explicit duration values (like 500ms or 1s) create breathing room that mimics human speech patterns. For dialogue scenes, use shorter breaks (200-400ms) between speaker turns. For educational content, longer breaks (700-1000ms) between complex concepts help comprehension. Prosody tags control the musicality of speech—rate for speed, pitch for tone, and volume for emphasis. A 10% rate reduction with 15% pitch increase can make technical explanations clearer.
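As a rough illustration of the break and prosody tags described above, the following Python sketch assembles a small SSML document. The helper names (`pause`, `prosody`, `speak`) are made up for this example, and the attribute formats a provider accepts (percentages, keywords, or multipliers) vary, so treat the values as assumptions to check against your API's documentation.

```python
from xml.sax.saxutils import escape


def pause(ms: int) -> str:
    """Return an SSML break tag with an explicit duration in milliseconds."""
    return f'<break time="{ms}ms"/>'


def prosody(text: str, rate: str = "100%", pitch: str = "+0%") -> str:
    """Wrap text in a prosody tag; the text is XML-escaped first."""
    return f'<prosody rate="{rate}" pitch="{pitch}">{escape(text)}</prosody>'


def speak(*parts: str) -> str:
    """Join already-built SSML fragments inside a <speak> root element."""
    return "<speak>" + "".join(parts) + "</speak>"


ssml = speak(
    escape("Welcome to the course."),
    pause(700),  # longer pause before a complex concept
    # 10% slower and 15% higher, the adjustment suggested for technical text
    prosody("A hash map stores key-value pairs.", rate="90%", pitch="+15%"),
)
print(ssml)
```

Escaping the text before wrapping it matters because characters such as `&` or `<` in a script would otherwise produce invalid XML.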

Phoneme tags are essential for brand names, technical terms, and multilingual content. Instead of relying on the AI's default pronunciation dictionary, you can specify exact phonetic spellings using the International Phonetic Alphabet. The say-as tag tells the engine how to interpret a piece of text, for example as a date, a cardinal number, or a sequence of spelled-out characters. Delivery instructions such as "whisper" or "with excitement" are not part of standard SSML; where they are available, they come from provider-specific extensions, so check your API's documentation for the exact syntax. When combined strategically, these tags transform robotic output into engaging narration.
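A per-project pronunciation dictionary can be applied automatically before submission. The sketch below is one possible approach, not a provider feature: `PRONUNCIATIONS` and `apply_phonemes` are hypothetical names, and the single entry is only a sample IPA transcription.

```python
import re
from xml.sax.saxutils import escape, quoteattr

# Hypothetical pronunciation dictionary: term -> IPA transcription.
PRONUNCIATIONS = {
    "pecan": "pɪˈkɑːn",
}


def apply_phonemes(text: str, pronunciations: dict) -> str:
    """Escape the text, then wrap each known term in an SSML phoneme tag."""
    result = escape(text)
    for term, ipa in pronunciations.items():
        tag = f'<phoneme alphabet="ipa" ph={quoteattr(ipa)}>{escape(term)}</phoneme>'
        # \b restricts the match to whole words (case-sensitive). The lambda
        # returns the tag verbatim, so no backslash processing is applied.
        result = re.sub(rf"\b{re.escape(term)}\b", lambda m: tag, result)
    return result


print(apply_phonemes("I like pecan pie.", PRONUNCIATIONS))
```

One limitation of this simple version: a later dictionary term that happens to match text inside an already inserted tag (for example the word "alphabet") would corrupt the markup, so a larger dictionary would need a tokenizing pass instead of repeated substitution.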

Use 1bit AI Text To Speech when you want a faster workflow

1bit AI's TTS API includes intelligent SSML parsing that automatically optimizes tag combinations for natural output. Our system suggests appropriate break durations based on content type and applies emotional context from surrounding tags. This reduces the trial-and-error typically required with raw SSML implementation. New users get free credits to experience this streamlined approach.

How to Add Emotion to AI Voice Generator APIs

Emotional expression separates amateur TTS output from professional voiceovers. Modern AI voice generator APIs support emotional parameters through SSML extensions, dedicated emotion tags, or voice profile selections. The key is understanding which emotional dimensions each API supports and how to apply them contextually.

Start by mapping emotions to content types: excitement for product demos, empathy for customer service, authority for training materials, and warmth for storytelling. APIs that expose emotion controls typically offer a small core set of labels (for example happy, sad, angry, fearful, surprised, disgusted, and neutral), though the exact names differ between providers. Advanced systems like 1bit AI add nuanced variations like "confident," "empathetic," "enthusiastic," and "serious." Implement emotional tags at the paragraph or sentence level, because applying a single emotion to an entire 5-minute narration creates unnatural monotony.

Combine emotional tags with prosody adjustments for maximum effect. An "excited" emotion paired with a 20% rate increase and 10% pitch elevation creates authentic enthusiasm. A "serious" emotion with a 15% rate decrease and neutral pitch conveys authority. Test emotional transitions—how the AI moves from "neutral" to "surprised" should sound natural, not abrupt. For dialogue, assign different emotional profiles to different speakers using speaker tags or separate API calls.
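The pairings above can be kept in a small lookup table so that every passage with the same emotion gets the same prosody. This is a minimal sketch: `PRESETS` and `with_emotion_prosody` are illustrative names, and only standard prosody markup is emitted because the emotion tag syntax itself is provider-specific.

```python
from xml.sax.saxutils import escape

# Prosody presets based on the figures in the text above. Rate is written as
# a percentage of the default speed and pitch as a relative change; both
# formats are assumptions that vary between providers.
PRESETS = {
    "excited": {"rate": "120%", "pitch": "+10%"},  # 20% faster, 10% higher
    "serious": {"rate": "85%", "pitch": "+0%"},    # 15% slower, neutral pitch
    "neutral": {"rate": "100%", "pitch": "+0%"},
}


def with_emotion_prosody(text: str, emotion: str) -> str:
    """Wrap text in the prosody settings paired with an emotion label.

    Unknown labels fall back to the neutral preset.
    """
    preset = PRESETS.get(emotion, PRESETS["neutral"])
    return (
        f'<prosody rate="{preset["rate"]}" pitch="{preset["pitch"]}">'
        f"{escape(text)}</prosody>"
    )


print(with_emotion_prosody("Our biggest release yet!", "excited"))
```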

Advanced Pacing Control in Voiceover API Integration

Pacing is the rhythmic foundation of natural speech, and controlling it effectively is one of the most valuable TTS API best practices. Professional voiceover API integration requires understanding three pacing dimensions: micro-pauses (between words), meso-pauses (between phrases), and macro-pauses (between paragraphs or sections).

Micro-pacing adjustments solve the "machine gun" effect where words blur together. Use prosody rate tags at the word level for emphasis—slowing specific terms by 30% makes them stand out. Meso-pacing uses SSML break tags with graduated durations: 100ms after commas, 300ms after periods, 500ms after paragraphs. Macro-pacing requires content analysis—technical explanations need 20% slower baseline rates than narrative content. Implement adaptive pacing that speeds up during less important passages and slows for key points.
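The graduated durations mentioned above (100ms after commas, 300ms after sentence-ending punctuation, 500ms between paragraphs) can be applied mechanically. The function below is a sketch under simple assumptions: paragraphs are separated by blank lines, and any period followed by whitespace is treated as a sentence end, so abbreviations such as "e.g." would also receive a pause.

```python
import re
from xml.sax.saxutils import escape


def add_graduated_breaks(text: str) -> str:
    """Insert SSML breaks after commas, sentence ends, and paragraphs."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    rendered = []
    for paragraph in paragraphs:
        # Collapse internal whitespace, then escape XML special characters.
        body = escape(" ".join(paragraph.split()))
        # Short pause after a comma that is followed by whitespace
        # (so "1,000" is left alone).
        body = re.sub(r",(\s)", r',<break time="100ms"/>\1', body)
        # Medium pause after sentence-ending punctuation inside a paragraph.
        body = re.sub(r"([.!?])(\s)", r'\1<break time="300ms"/>\2', body)
        rendered.append(body)
    # Longest pause at each paragraph boundary.
    return "<speak>" + '<break time="500ms"/>'.join(rendered) + "</speak>"


print(add_graduated_breaks("Hello, world. Next one.\n\nNew paragraph."))
```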

For different content types: eLearning needs consistent pacing with clear pauses between concepts (average 150 words/minute). Podcasts benefit from variable pacing with conversational speed changes (160-180 wpm). Video voiceovers should match visual timing—use timestamp-based break tags synchronized with scene changes. Commercials require dramatic pacing shifts: fast for benefits, slow for calls-to-action. Always test pacing with your target audience—what sounds natural to developers might feel rushed to end-users.
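The words-per-minute targets above translate directly into duration estimates, which helps when a voiceover has to fit a fixed video slot. The arithmetic below is a sketch that assumes the chosen voice speaks at roughly `base_wpm` at its default rate; real voices differ, so measure a sample before relying on the numbers.

```python
def estimate_seconds(text: str, wpm: float = 150.0) -> float:
    """Estimate narration length in seconds at a given words-per-minute pace."""
    words = len(text.split())
    return words * 60.0 / wpm


def rate_percent_to_fit(text: str, slot_seconds: float, base_wpm: float = 150.0) -> float:
    """Return the prosody rate (as a percentage of default speed) needed
    to fit the text into slot_seconds, assuming base_wpm at 100% rate."""
    words = len(text.split())
    return 100.0 * words * 60.0 / (slot_seconds * base_wpm)


script = " ".join(["word"] * 150)
print(estimate_seconds(script))           # length at the eLearning pace
print(rate_percent_to_fit(script, 50.0))  # rate needed for a 50-second scene
```

If the required rate comes out far above 100%, trimming the script is usually a better fix than speeding up the voice.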

Ready to try 1bit AI Text To Speech?

New users get free credits to try it. Our API includes intelligent pacing algorithms that automatically adjust based on content analysis—just upload your script and hear the difference. Start by converting a 500-word blog post to experience adaptive pacing in action.

Multilingual TTS API Implementation Strategies

True multilingual TTS API implementation goes beyond supporting multiple languages—it requires understanding linguistic nuances, cultural speech patterns, and locale-specific pronunciations. The best multilingual text to speech systems handle code-switching (mixing languages within sentences), proper noun preservation, and locale-appropriate emotional expression.

Start with language detection at the paragraph or sentence level, and don't force a single voice to handle multiple languages. Set the SSML xml:lang attribute explicitly even when the API auto-detects the language. For mixed-language content, implement boundary markers that signal language changes to the AI. Pay attention to phonetic differences: the Spanish "j" sounds different in Spain and Mexico, and French vowels vary between Parisian and Canadian dialects.

Cultural pacing matters—Japanese narration typically uses shorter pauses than German. Emotional expression varies culturally: excitement levels appropriate for American English might sound exaggerated in British English. Implement locale-specific voice profiles: use Mexican Spanish voices for Latin American audiences, Castilian Spanish for Spain. For proper nouns and technical terms, create pronunciation dictionaries per language. Test with native speakers for each target language—automated quality metrics often miss subtle unnaturalness.
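For mixed-language content, the language boundaries can be made explicit in the markup. The sketch below uses the `xml:lang` attribute and the `<lang>` element from SSML 1.1; whether a given provider honors `<lang>` (and whether it also expects `version` and `xmlns` attributes on the root) is an assumption to verify. The function name and the segment format are invented for this example.

```python
from xml.sax.saxutils import escape, quoteattr


def build_multilingual_ssml(default_lang: str, segments: list) -> str:
    """Build SSML from (locale, text) pairs, marking every language switch.

    Segments in the default locale are emitted as plain text; all others
    are wrapped in a <lang> element carrying their own locale code.
    """
    parts = []
    for lang, text in segments:
        if lang == default_lang:
            parts.append(escape(text))
        else:
            parts.append(f"<lang xml:lang={quoteattr(lang)}>{escape(text)}</lang>")
    return f"<speak xml:lang={quoteattr(default_lang)}>" + " ".join(parts) + "</speak>"


print(build_multilingual_ssml("en-US", [
    ("en-US", "The word"),
    ("es-MX", "jalapeño"),  # Mexican Spanish locale, not Castilian (es-ES)
    ("en-US", "comes from Mexico."),
]))
```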

Text to Speech API Tutorial: Step-by-Step Integration

This practical text to speech API tutorial walks through implementing professional-grade TTS with emotional control and natural pacing. We'll use a Python example, but the concepts apply to any programming language.

Step 1: API Setup and Authentication

Obtain your API key from your TTS provider. For 1bit AI, this is in your dashboard. Store it securely using environment variables. Install necessary SDKs—most providers offer Python, JavaScript, and other language libraries. Test authentication with a simple status check before proceeding to synthesis.
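A minimal sketch of the key handling described in this step follows. The variable name `TTS_API_KEY` and the bearer-token header are assumptions made for illustration; use whatever name and authentication scheme your provider documents.

```python
import os


def load_api_key(env_var: str = "TTS_API_KEY") -> str:
    """Read the API key from the environment and fail early if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable before calling the API.")
    return key


def auth_headers(api_key: str) -> dict:
    """Build request headers.

    Bearer-token auth is an assumption here; some providers expect a
    custom header or a query parameter instead.
    """
    return {"Authorization": f"Bearer {api_key}"}
```

Failing at startup with a clear message is easier to debug than an authentication error returned halfway through a batch job.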

Step 2: Basic Text Synthesis

Convert plain text first to establish baseline quality. Note where the AI mispronounces terms or creates unnatural pacing. This identifies areas needing SSML intervention. Save the output as your "before" sample for comparison.

Step 3: SSML Implementation

Wrap your text in SSML root tags. Add break tags at paragraph boundaries (500ms) and sentence ends (300ms). Insert prosody tags for emphasis on key terms—reduce rate by 25% and increase volume by 10%. Use phoneme tags for problematic pronunciations.
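One way to carry out this step without hand-writing XML is to build the document with Python's standard `xml.etree.ElementTree`, which handles escaping and nesting. The sketch below is illustrative: `build_ssml` and `add_sentence` are hypothetical helpers, only the first matching key term per sentence is emphasized, and the `volume="+10%"` format is an assumption (some providers expect decibel values instead).

```python
import xml.etree.ElementTree as ET


def add_sentence(parent, sentence, key_terms):
    """Append an <s> element, slowing down the first key term found."""
    s = ET.SubElement(parent, "s")
    for term in key_terms:
        idx = sentence.find(term)
        if idx != -1:
            s.text = sentence[:idx]
            # 25% slower and 10% louder, as suggested in the step above.
            emph = ET.SubElement(s, "prosody", rate="75%", volume="+10%")
            emph.text = term
            emph.tail = sentence[idx + len(term):]
            return s
    s.text = sentence
    return s


def build_ssml(paragraphs, key_terms=()):
    """paragraphs is a list of paragraphs, each a list of sentence strings."""
    speak = ET.Element("speak")
    for p_index, sentences in enumerate(paragraphs):
        if p_index > 0:
            ET.SubElement(speak, "break", time="500ms")  # paragraph boundary
        p = ET.SubElement(speak, "p")
        for s_index, sentence in enumerate(sentences):
            if s_index > 0:
                ET.SubElement(p, "break", time="300ms")  # sentence end
            add_sentence(p, sentence, key_terms)
    return ET.tostring(speak, encoding="unicode")


print(build_ssml([["Save often.", "Use R&D builds."], ["Done."]], key_terms=["R&D"]))
```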

Step 4: Emotional Layer Integration

Add emotional context markers using your API's specific syntax. For 1bit AI, use emotion tags at paragraph starts. Match emotions to content—"excited" for benefits, "serious" for warnings, "neutral" for factual sections. Test emotional transitions between paragraphs.
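Because emotion markup differs between providers, it can help to plan emotions as plain data first and render them with provider-specific syntax afterwards. The sketch below does only the planning part; `EMOTION_BY_CONTENT`, `plan_emotions`, and `abrupt_transitions` are hypothetical names, and the content-type labels are the ones used in this step.

```python
# Mapping from the step above: content type -> emotion label.
EMOTION_BY_CONTENT = {"benefit": "excited", "warning": "serious", "fact": "neutral"}


def plan_emotions(sections, supported):
    """Assign an emotion to each (content_type, text) pair.

    Labels the provider does not support fall back to "neutral".
    """
    plan = []
    for content_type, text in sections:
        emotion = EMOTION_BY_CONTENT.get(content_type, "neutral")
        if emotion not in supported:
            emotion = "neutral"
        plan.append({"emotion": emotion, "text": text})
    return plan


def abrupt_transitions(plan):
    """Return index pairs of adjacent paragraphs that jump directly between
    two different non-neutral emotions, so they can be checked by ear."""
    flagged = []
    for i in range(1, len(plan)):
        prev, cur = plan[i - 1]["emotion"], plan[i]["emotion"]
        if prev != cur and "neutral" not in (prev, cur):
            flagged.append((i - 1, i))
    return flagged


plan = plan_emotions(
    [("benefit", "Save hours every week."), ("warning", "Back up first."), ("fact", "Version 2 is out.")],
    supported={"excited", "serious", "neutral"},
)
print(abrupt_transitions(plan))
```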

Step 5: Batch Processing and Optimization

For long content, split into logical chunks (300-500 words each). Process chunks with varied pacing to maintain listener engagement. Implement caching for frequently used phrases. Add error handling for network issues and quota limits.
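The chunking and caching ideas in this step can be sketched as follows. Sentences are detected with a simple punctuation rule, and `synthesize` stands in for whatever function actually calls your TTS provider; both are assumptions of this example rather than part of any specific API.

```python
import hashlib
import re


def chunk_text(text: str, max_words: int = 500) -> list:
    """Split text into chunks of at most max_words, cutting only at
    sentence boundaries. A single sentence longer than max_words is
    kept whole in its own chunk."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current, count = [], [], 0
    for sentence in sentences:
        if not sentence:
            continue
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


def synthesize_cached(chunk: str, synthesize, cache: dict) -> bytes:
    """Return cached audio for a chunk, calling synthesize only on a miss."""
    key = hashlib.sha256(chunk.encode("utf-8")).hexdigest()
    if key not in cache:
        cache[key] = synthesize(chunk)
    return cache[key]
```

In a real integration the cache key would also need to include the voice and any pacing settings, since the same text rendered with a different voice is different audio.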

Common TTS API Mistakes and Troubleshooting

Even experienced developers make these TTS API mistakes. Recognizing and avoiding them will significantly improve your voiceover quality and reduce debugging time.

| Mistake | Symptoms | Solution |
|---|---|---|
| Overusing SSML breaks | Speech sounds robotic with unnatural pauses, losing flow | Use breaks only at sentence/paragraph ends; vary durations (200-800ms) |
| Emotional inconsistency | Voice shifts tone abruptly between sentences | Apply emotions at paragraph level; use neutral transitions between emotions |
| Ignoring rate limits | API calls fail intermittently; quota exceeded errors | Implement exponential backoff; cache frequent phrases; monitor usage |
| Poor text preprocessing | Mispronounced acronyms, dates read incorrectly | Expand abbreviations ("Feb" → "February"); format numbers as words |
| Single-voice multilingual | Accented pronunciation in non-native languages | Use dedicated voices per language; implement language detection |

For troubleshooting: if speech sounds monotone, add prosody variations. If pacing feels rushed, reduce baseline rate by 15%. If emotional tags aren't working, check your API's supported emotion list—not all systems support the same labels. For pronunciation issues, use IPA phoneme tags instead of spelling approximations.
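For the rate-limit row in the table above, exponential backoff can be sketched in a few lines. `RateLimitError` is a placeholder for whatever exception your client raises on a quota or HTTP 429 response, and the delay values are illustrative defaults rather than provider recommendations.

```python
import random
import time


class RateLimitError(Exception):
    """Placeholder for the error a TTS client raises when rate-limited."""


def call_with_backoff(request_fn, max_attempts=5, base_delay=1.0,
                      max_delay=30.0, sleep=time.sleep):
    """Call request_fn, retrying on RateLimitError with doubling delays.

    The delay starts at base_delay, doubles after each failure, and is
    capped at max_delay. Up to 10% random jitter is added so that many
    clients do not retry at the same instant.
    """
    for attempt in range(max_attempts):
        try:
            return request_fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of retries: surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay + random.uniform(0, delay * 0.1))
```

Passing `sleep` as a parameter keeps the function testable: a test can supply a recorder instead of actually waiting.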

Which TTS API is Best for Developers? Comparison Guide

Choosing the right TTS API depends on your specific needs. This comparison focuses on developer experience, feature completeness, and naturalness—key factors in TTS API best practices implementation.

| Feature | 1bit AI TTS API | Standard Cloud APIs | Basic TTS Services |
|---|---|---|---|
| SSML Support | Full SSML 1.0 + emotional extensions | Basic SSML (break, prosody, say-as) | Limited or no SSML |
| Emotional Control | 12 nuanced emotions with smooth transitions | 5-8 basic emotions | Neutral only |
| Pacing Intelligence | Content-aware adaptive pacing | Manual rate control only | Fixed pacing |
| Multilingual Handling | 80+ languages with locale variants | 30-50 languages | 10-20 major languages |
| Developer Experience | Interactive docs, code samples, live testing | API reference only | Basic documentation |
| Pricing Model | Pay-per-use with free credits | Monthly tiers + overage | Fixed monthly plans |

For most developers building professional applications, 1bit AI provides the optimal balance of advanced features and developer-friendly implementation. The emotional control and pacing intelligence reduce the need for extensive SSML markup, while the comprehensive multilingual support handles global use cases. The pay-per-use model with free starting credits makes it accessible for prototyping and scaling.

Experience the difference with 1bit AI's TTS API

Our API delivers studio-quality voiceovers with minimal configuration. The intelligent emotional and pacing systems understand context, reducing the need for manual SSML tuning. Start with free credits to test with your actual content—compare the naturalness against other solutions.


FAQ

How do I make TTS sound more natural?

Implement strategic pauses using SSML break tags (300-800ms between sentences). Add prosody variations for emphasis—slow down key terms by 20-30%. Use emotional context markers appropriate to content type. Structure text with proper punctuation before API submission. Choose voice profiles matched to content (narration vs. dialogue). Test with actual listeners and iterate based on feedback.

What are SSML tags in text to speech?

SSML (Speech Synthesis Markup Language) tags are XML-based instructions that control how TTS engines interpret text. Key tags include break for pauses, prosody for rate/pitch/volume control, phoneme for pronunciation, and say-as for text interpretation (dates, numbers). Emotion or speaking-style tags are not part of the SSML standard; some providers add them as extensions for vocal expression. SSML enables precise control over pacing, emphasis, and naturalness that plain text cannot achieve.

How do I add emotion to an AI voice generator?

Use your API's emotion tags (like emotion="excited") at paragraph or sentence level. Combine with prosody adjustments—increase rate and pitch for excitement, decrease for seriousness. Implement emotional transitions between content sections. Choose voices optimized for emotional expression. Test different emotional intensities (mild vs. strong). Apply emotions contextually—different emotions for benefits vs. warnings vs. instructions.

Which TTS API is best for developers?

The best TTS API for developers offers comprehensive SSML support, emotional control, intelligent pacing, clear documentation, and flexible pricing. 1bit AI provides all these plus free starting credits for testing. Key developer features: interactive API playground, code samples in multiple languages, webhook support for async processing, detailed usage analytics, and responsive technical support for integration questions.

How do I control pacing in a voiceover API?

Use the SSML prosody rate attribute to set baseline speed. Depending on the provider, the value is a keyword such as slow or fast, a percentage, or a multiplier; a range of 0.5x to 2.0x is common but not universal. Insert break tags with duration values for pauses. Implement content-aware pacing: slower for complex information, faster for narrative. Use emphasis tags to slow specific words. For long content, vary pacing between sections to maintain engagement. Test different pacing profiles with sample audiences to find optimal settings.

Conclusion

Mastering TTS API best practices transforms robotic text-to-speech into engaging, natural voiceovers. By combining SSML structural control with emotional intelligence and strategic pacing, you can create voiceovers that captivate audiences across content types. Remember that naturalness comes from variation—in pacing, emotion, and emphasis. The most effective implementations test continuously with real listeners and iterate based on feedback. Whether you're building video content, educational materials, or interactive applications, these techniques will elevate your audio quality.

Start implementing these TTS API best practices today with a platform designed for natural voice generation. 1bit AI Text To Speech provides the tools and intelligence to create professional voiceovers without extensive manual tuning.